Merge arch3rPro/1Panel-Appstore into localApps

|

|

@ -0,0 +1,16 @@

|

|||

# AFFiNE

|

||||

**AFFiNE** is an open-source integrated workspace and operating system for building knowledge bases and more—wiki, knowledge management, presentations, and digital assets. It's a better alternative to Notion and Miro.

|

||||

|

||||

## Key Features:

|

||||

### True canvas for blocks of any form, documents and whiteboards now fully merged.

|

||||

- Many editor apps claim to be productivity canvases, but AFFiNE is one of the very few apps that allows you to place any building block on an infinite canvas—rich text, sticky notes, embedded webpages, multi-view databases, linked pages, shapes, and even slideshows. We have it all.

|

||||

|

||||

### Multi-mode AI partner ready for any task

|

||||

- Writing professional work reports? Turning outlines into expressive and easy-to-present slideshows? Summarizing articles into well-structured mind maps? Organizing work plans and to-dos? Or... drawing and writing prototype applications and webpages with just a prompt? With you, AFFiNE AI can push your creativity to the edge of imagination.

|

||||

|

||||

### Local-first, real-time collaboration

|

||||

- We love the local-first concept, meaning you always own your data on disk, regardless of cloud usage. Additionally, AFFiNE supports real-time synchronization and collaboration on the web and across platform clients.

|

||||

|

||||

### Self-host and shape your own AFFiNE

|

||||

- You're free to manage, self-host, fork, and build your own AFFiNE. Plugin communities and third-party modules are coming soon. Blocksuite has even more traction. Check it out to learn how to self-host AFFiNE.

|

||||

|

||||

|

|

@ -0,0 +1,70 @@

|

|||

additionalProperties:

|

||||

formFields:

|

||||

- default: 3010

|

||||

envKey: PANEL_APP_PORT_HTTP

|

||||

labelEn: Web Port

|

||||

labelZh: HTTP 端口

|

||||

required: true

|

||||

rule: paramPort

|

||||

type: number

|

||||

label:

|

||||

en: Web Port

|

||||

zh: HTTP 端口

|

||||

- default: affine

|

||||

envKey: DB_DATABASE

|

||||

labelEn: Database

|

||||

labelZh: 数据库名

|

||||

required: true

|

||||

rule: paramCommon

|

||||

type: text

|

||||

label:

|

||||

en: Database

|

||||

zh: 数据库名

|

||||

- default: affine

|

||||

envKey: DB_USERNAME

|

||||

labelEn: User

|

||||

labelZh: 数据库用户

|

||||

random: true

|

||||

required: true

|

||||

rule: paramCommon

|

||||

type: text

|

||||

label:

|

||||

en: User

|

||||

zh: 数据库用户

|

||||

- default: affine

|

||||

envKey: DB_PASSWORD

|

||||

labelEn: Password

|

||||

labelZh: 数据库用户密码

|

||||

random: true

|

||||

required: true

|

||||

type: password

|

||||

label:

|

||||

en: Password

|

||||

zh: 数据库用户密码

|

||||

- default: ~/.affine/self-host/storage

|

||||

envKey: UPLOAD_LOCATION

|

||||

labelEn: Upload Location

|

||||

labelZh: 上传目录

|

||||

required: true

|

||||

type: text

|

||||

label:

|

||||

en: Upload Location

|

||||

zh: 上传目录

|

||||

- default: ~/.affine/self-host/config

|

||||

envKey: CONFIG_LOCATION

|

||||

labelEn: Config Location

|

||||

labelZh: 配置目录

|

||||

required: true

|

||||

type: text

|

||||

label:

|

||||

en: Config Location

|

||||

zh: 配置目录

|

||||

- default: ~/.affine/self-host/postgres/pgdata

|

||||

envKey: DB_DATA_LOCATION

|

||||

labelEn: PostgreSQL Data Location

|

||||

labelZh: PostgreSQL 数据目录

|

||||

required: true

|

||||

type: text

|

||||

label:

|

||||

en: PostgreSQL Data Location

|

||||

zh: PostgreSQL 数据目录

|

||||

|

|

@ -0,0 +1,89 @@

|

|||

services:

|

||||

affine:

|

||||

image: ghcr.io/toeverything/affine-graphql:stable

|

||||

container_name: ${CONTAINER_NAME}

|

||||

ports:

|

||||

- ${PANEL_APP_PORT_HTTP}:3010

|

||||

depends_on:

|

||||

redis:

|

||||

condition: service_healthy

|

||||

postgres:

|

||||

condition: service_healthy

|

||||

affine_migration:

|

||||

condition: service_completed_successfully

|

||||

volumes:

|

||||

# custom configurations

|

||||

- ${UPLOAD_LOCATION}:/root/.affine/storage

|

||||

- ${CONFIG_LOCATION}:/root/.affine/config

|

||||

environment:

|

||||

- REDIS_SERVER_HOST=redis

|

||||

- DATABASE_URL=postgresql://${DB_USERNAME}:${DB_PASSWORD}@postgres:5432/${DB_DATABASE:-affine}

|

||||

- AFFINE_INDEXER_ENABLED=false

|

||||

networks:

|

||||

- 1panel-network

|

||||

restart: always

|

||||

labels:

|

||||

createdBy: Apps

|

||||

affine_migration:

|

||||

image: ghcr.io/toeverything/affine-graphql:stable

|

||||

container_name: ${CONTAINER_NAME}_migration_job

|

||||

volumes:

|

||||

# custom configurations

|

||||

- ${UPLOAD_LOCATION}:/root/.affine/storage

|

||||

- ${CONFIG_LOCATION}:/root/.affine/config

|

||||

command: ['sh', '-c', 'node ./scripts/self-host-predeploy.js']

|

||||

networks:

|

||||

- 1panel-network

|

||||

environment:

|

||||

- REDIS_SERVER_HOST=redis

|

||||

- DATABASE_URL=postgresql://${DB_USERNAME}:${DB_PASSWORD}@postgres:5432/${DB_DATABASE:-affine}

|

||||

- AFFINE_INDEXER_ENABLED=false

|

||||

depends_on:

|

||||

postgres:

|

||||

condition: service_healthy

|

||||

redis:

|

||||

condition: service_healthy

|

||||

labels:

|

||||

createdBy: Apps

|

||||

restart: "no"

|

||||

redis:

|

||||

image: redis

|

||||

container_name: ${CONTAINER_NAME}_redis

|

||||

healthcheck:

|

||||

test: ['CMD', 'redis-cli', '--raw', 'incr', 'ping']

|

||||

interval: 10s

|

||||

timeout: 5s

|

||||

retries: 5

|

||||

networks:

|

||||

- 1panel-network

|

||||

labels:

|

||||

createdBy: Apps

|

||||

restart: always

|

||||

|

||||

postgres:

|

||||

image: pgvector/pgvector:pg16

|

||||

container_name: ${CONTAINER_NAME}_postgres

|

||||

volumes:

|

||||

- ${DB_DATA_LOCATION}:/var/lib/postgresql/data

|

||||

networks:

|

||||

- 1panel-network

|

||||

labels:

|

||||

createdBy: Apps

|

||||

environment:

|

||||

POSTGRES_USER: ${DB_USERNAME}

|

||||

POSTGRES_PASSWORD: ${DB_PASSWORD}

|

||||

POSTGRES_DB: ${DB_DATABASE:-affine}

|

||||

POSTGRES_INITDB_ARGS: '--data-checksums'

|

||||

# you should set a password for your database

|

||||

# or you may add 'POSTGRES_HOST_AUTH_METHOD=trust' to ignore postgres security policy

|

||||

POSTGRES_HOST_AUTH_METHOD: trust

|

||||

healthcheck:

|

||||

test:

|

||||

['CMD', 'pg_isready', '-U', "${DB_USERNAME}", '-d', "${DB_DATABASE:-affine}"]

|

||||

interval: 10s

|

||||

timeout: 5s

|

||||

retries: 5

|

||||

restart: always

|

||||

networks:

|

||||

1panel-network:

|

||||

external: true

|

||||

|

|

@ -0,0 +1,11 @@

|

|||

CONTAINER_NAME="blinko"

|

||||

NEXTAUTH_SECRET="my_ultra_secure_nextauth_secret"

|

||||

NEXTAUTH_URL="http://1.2.3.4:1111"

|

||||

NEXT_PUBLIC_BASE_URL="http://1.2.3.4:1111"

|

||||

PANEL_APP_PORT_HTTP=1111

|

||||

PANEL_DB_HOST="postgresql"

|

||||

PANEL_DB_HOST_NAME="postgresql"

|

||||

PANEL_DB_NAME="blinko"

|

||||

PANEL_DB_PORT=5432

|

||||

PANEL_DB_USER="blinko"

|

||||

PANEL_DB_USER_PASSWORD="blinko"

|

||||

|

|

@ -0,0 +1,70 @@

|

|||

additionalProperties:

|

||||

formFields:

|

||||

- default: "1111"

|

||||

envKey: PANEL_APP_PORT_HTTP

|

||||

labelEn: HTTP Port

|

||||

labelZh: HTTP 端口

|

||||

required: true

|

||||

rule: paramPort

|

||||

type: number

|

||||

- default: "http://1.2.3.4:1111"

|

||||

envKey: NEXTAUTH_URL

|

||||

labelEn: NextAuth URL

|

||||

labelZh: 基本 URL

|

||||

required: true

|

||||

rule: paramExtUrl

|

||||

type: text

|

||||

- default: "http://1.2.3.4:1111"

|

||||

envKey: NEXT_PUBLIC_BASE_URL

|

||||

labelEn: Next Public Base URL

|

||||

labelZh: 公共基本 URL

|

||||

required: true

|

||||

rule: paramExtUrl

|

||||

type: text

|

||||

- default: "my_ultra_secure_nextauth_secret"

|

||||

envKey: NEXTAUTH_SECRET

|

||||

labelEn: NextAuth Secret

|

||||

labelZh: NextAuth 密钥

|

||||

random: true

|

||||

required: true

|

||||

rule: paramComplexity

|

||||

type: password

|

||||

- default: ""

|

||||

envKey: PANEL_DB_HOST

|

||||

key: postgresql

|

||||

labelEn: PostgreSQL Database Service

|

||||

labelZh: PostgreSQL 数据库服务

|

||||

required: true

|

||||

type: service

|

||||

- default: "5432"

|

||||

edit: true

|

||||

envKey: PANEL_DB_PORT

|

||||

labelEn: Database Port Number

|

||||

labelZh: 数据库端口号

|

||||

required: true

|

||||

rule: paramPort

|

||||

type: number

|

||||

- default: "blinko"

|

||||

envKey: PANEL_DB_NAME

|

||||

labelEn: Database

|

||||

labelZh: 数据库名

|

||||

random: true

|

||||

required: true

|

||||

rule: paramCommon

|

||||

type: text

|

||||

- default: "blinko"

|

||||

envKey: PANEL_DB_USER

|

||||

labelEn: User

|

||||

labelZh: 数据库用户

|

||||

random: true

|

||||

required: true

|

||||

rule: paramCommon

|

||||

type: text

|

||||

- default: "blinko"

|

||||

envKey: PANEL_DB_USER_PASSWORD

|

||||

labelEn: Password

|

||||

labelZh: 数据库用户密码

|

||||

random: true

|

||||

required: true

|

||||

rule: paramComplexity

|

||||

type: password

|

||||

|

|

@ -0,0 +1,35 @@

|

|||

services:

|

||||

blinko:

|

||||

image: "blinkospace/blinko:1.0.3"

|

||||

container_name: ${CONTAINER_NAME}

|

||||

environment:

|

||||

NODE_ENV: production

|

||||

NEXTAUTH_URL: ${NEXTAUTH_URL}

|

||||

NEXT_PUBLIC_BASE_URL: ${NEXT_PUBLIC_BASE_URL}

|

||||

NEXTAUTH_SECRET: ${NEXTAUTH_SECRET}

|

||||

DATABASE_URL: postgresql://${PANEL_DB_USER}:${PANEL_DB_USER_PASSWORD}@${PANEL_DB_HOST}:${PANEL_DB_PORT}/${PANEL_DB_NAME}

|

||||

# No depends_on here: PostgreSQL is the external 1Panel service at ${PANEL_DB_HOST}, not a service defined in this file.

|

||||

volumes:

|

||||

- "./data:/app/.blinko"

|

||||

restart: always

|

||||

logging:

|

||||

options:

|

||||

max-size: "10m"

|

||||

max-file: "3"

|

||||

ports:

|

||||

- "${PANEL_APP_PORT_HTTP}:1111"

|

||||

healthcheck:

|

||||

test: ["CMD", "curl", "-f", "http://localhost:1111/"]

|

||||

interval: 30s

|

||||

timeout: 10s

|

||||

retries: 5

|

||||

start_period: 30s

|

||||

networks:

|

||||

- 1panel-network

|

||||

labels:

|

||||

createdBy: "Apps"

|

||||

networks:

|

||||

1panel-network:

|

||||

external: true

|

||||

|

|

@ -0,0 +1,24 @@

|

|||

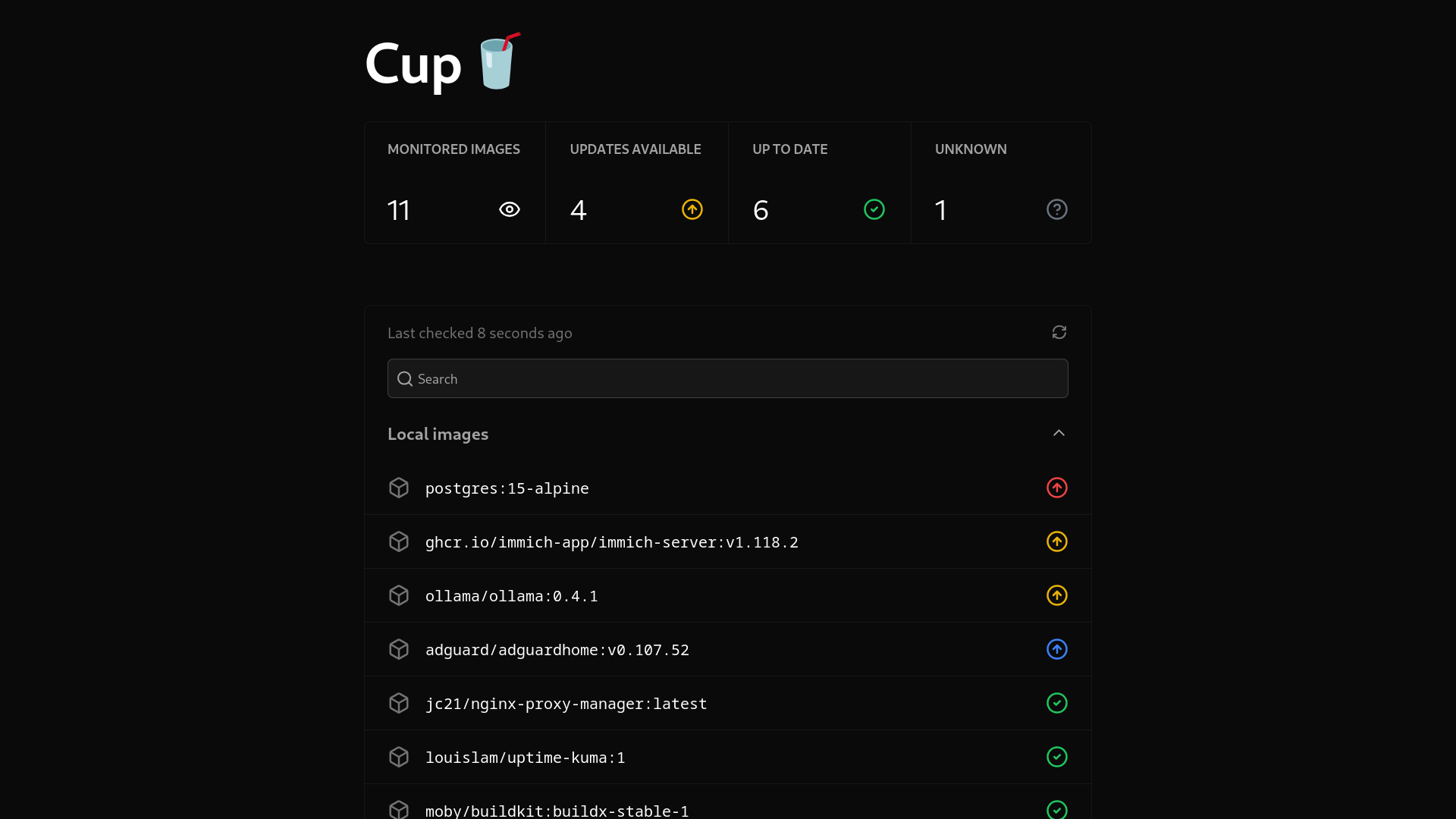

# cup

|

||||

|

||||

Cup is the easiest way to check for container image updates.

|

||||

|

||||

|

||||

|

||||

### Features ✨

|

||||

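- Extremely fast. Cup takes full advantage of your CPU and is highly optimized, resulting in lightning-fast performance. On my Raspberry Pi 5, checking 58 images took only 3.7 seconds!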

|

||||

- Supports most registries, including Docker Hub, ghcr.io, Quay, lscr.io and even Gitea (or derivatives).

|

||||

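- Doesn't exhaust any rate limits. This is the original reason I created Cup. I feel this feature is especially important now that Docker Hub is lowering its pull limits for unauthenticated users.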

|

||||

- A beautiful CLI and web interface for checking on your containers at any time.

|

||||

- The binary is tiny! At the time of writing it is only 5.4 MB. No more pulling 100+ MB Docker images for such a simple program.

|

||||

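- JSON output from both the CLI and the web interface, so you can connect Cup to your integrations. It is easy to parse and makes setting up webhooks and pretty dashboards simple!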

|

||||

|

||||

### Documentation 📘

|

||||

Take a look at https://cup.sergi0g.dev/docs!

|

||||

|

||||

### Limitations

|

||||

|

||||

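- Cup is still a work in progress. It may not have as many features as the alternatives. If one of those features is really important to you, please consider using another tool.

- Cup cannot directly trigger your integrations. If you want that to happen automatically, use What's up Docker instead. Cup was designed to be simple: the data is there, and it is up to you to retrieve it (for example, by running `cup check -r` from a cron job or by periodically requesting the `/api/v3/json` URL from the server).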
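The polling approach mentioned above could look roughly like the sketch below. It assumes the server started by `cup serve` is reachable on a host and port of your choosing and that `curl` is installed. The helper names `cup_json_url` and `fetch_cup_report` are made up for illustration; only the `/api/v3/json` path comes from the text above.

```shell
#!/bin/sh
# Hypothetical helper: build the URL of Cup's JSON endpoint.
# Host and port are assumptions; only the /api/v3/json path comes from the README.
cup_json_url() {
    printf 'http://%s:%s/api/v3/json\n' "$1" "$2"
}

# Hypothetical helper: fetch the JSON report once and write it to stdout.
# -f makes curl fail on HTTP errors, -sS hides progress but keeps error messages.
fetch_cup_report() {
    curl -fsS "$(cup_json_url "$1" "$2")"
}

# Example crontab entry (assumption: this file is saved as
# /usr/local/bin/cup-poll.sh and ends with a call such as
# `fetch_cup_report localhost 8000 > /tmp/cup.json`):
#   */30 * * * * /usr/local/bin/cup-poll.sh
```

Keeping the URL builder separate from the fetch makes it easy to point the same script at a different host or published port.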

|

||||

|

|

@ -0,0 +1,25 @@

|

|||

name: cup

|

||||

tags:

|

||||

- 实用工具

|

||||

title: 自动检测 Docker 容器基础镜像的工具

|

||||

description: 自动检测 Docker 容器基础镜像的工具

|

||||

additionalProperties:

|

||||

key: cup

|

||||

name: cup

|

||||

tags:

|

||||

- Tool

|

||||

shortDescZh: 自动检测 Docker 容器基础镜像的工具

|

||||

shortDescEn: Docker container updates made easy

|

||||

type: website

|

||||

crossVersionUpdate: true

|

||||

limit: 1

|

||||

recommend: 0

|

||||

website: https://cup.sergi0g.dev/

|

||||

github: https://github.com/sergi0g/cup

|

||||

document: https://cup.sergi0g.dev/docs

|

||||

description:

|

||||

en: Docker container updates made easy

|

||||

zh: 自动检测 Docker 容器基础镜像的工具

|

||||

architectures:

|

||||

- amd64

|

||||

- arm64

|

||||

|

|

@ -0,0 +1,10 @@

|

|||

additionalProperties:

|

||||

formFields:

|

||||

- default: "51230"

|

||||

edit: true

|

||||

envKey: PANEL_APP_PORT_HTTP

|

||||

labelEn: Service Port 8000

|

||||

labelZh: 服务端口 8000

|

||||

required: true

|

||||

rule: paramPort

|

||||

type: number

|

||||

|

|

@ -0,0 +1,17 @@

|

|||

services:

|

||||

cup:

|

||||

image: ghcr.io/sergi0g/cup:latest

|

||||

container_name: ${CONTAINER_NAME}

|

||||

restart: unless-stopped

|

||||

ports:

|

||||

- ${PANEL_APP_PORT_HTTP}:8000

|

||||

volumes:

|

||||

- /var/run/docker.sock:/var/run/docker.sock

|

||||

networks:

|

||||

- 1panel-network

|

||||

labels:

|

||||

createdBy: Apps

|

||||

command: serve

|

||||

networks:

|

||||

1panel-network:

|

||||

external: true

|

||||

|


|

|

@ -0,0 +1,31 @@

|

|||

### About the tool

|

||||

|

||||

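🚀 Reverse-engineered API for the DeepSeek-V3 & R1 large models [Specialty: a conscientious vendor] (the official API is very cheap, so going straight to the official one is recommended). Supports high-speed streaming output, multi-turn conversations, web search, R1 deep thinking, zero-configuration deployment, and multiple tokens. For testing only; for commercial use, please go to the official open platform.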

|

||||

|

||||

### Risk notice

|

||||

|

||||

- A reverse-engineered API is unstable. To avoid the risk of being banned, use the paid API from the official DeepSeek platform at https://platform.deepseek.com/ instead.

|

||||

|

||||

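- This organization and its members accept no donations or financial transactions of any kind. This project exists purely for research, exchange, and learning!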

|

||||

|

||||

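- For personal use only. Offering it as a service to others or using it commercially is prohibited, to avoid putting load on the official service; otherwise you bear the risk yourself!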

|

||||

|

||||

### Usage

|

||||

|

||||

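Make sure you are located in mainland China or have a personal computing device there; otherwise the deployment may be unusable because it cannot reach DeepSeek.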

|

||||

|

||||

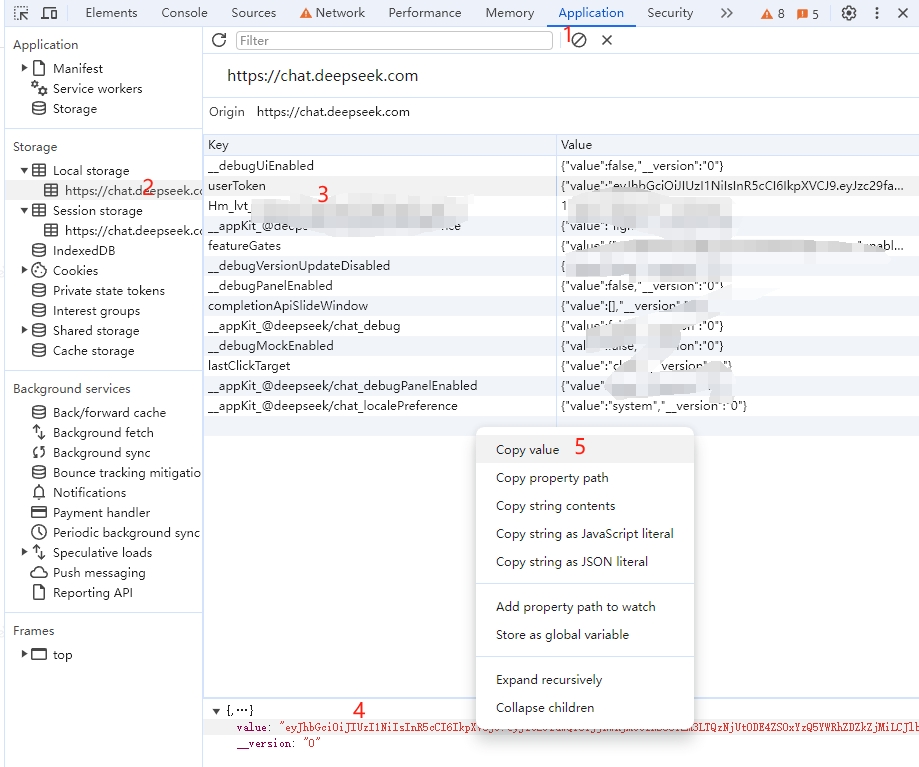

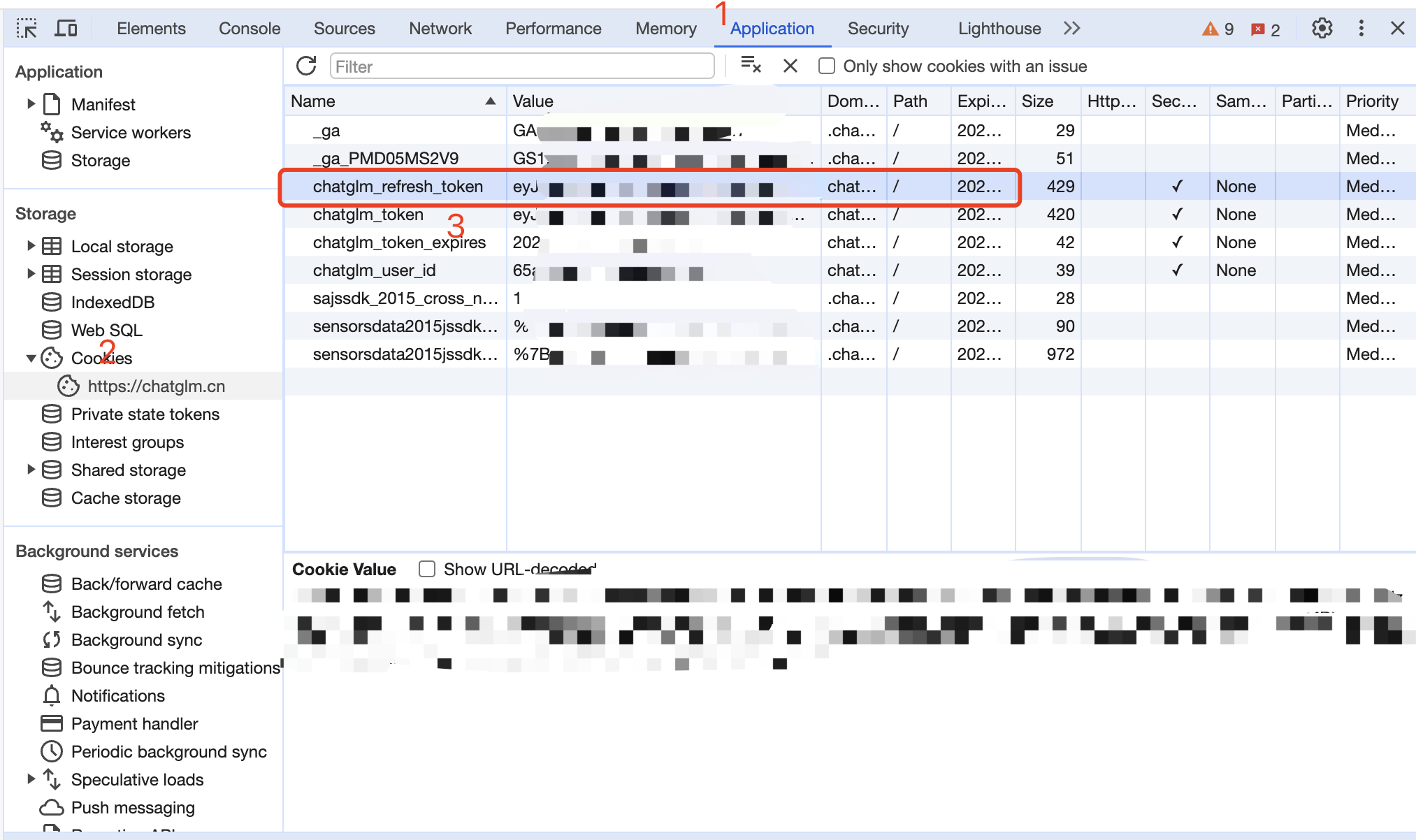

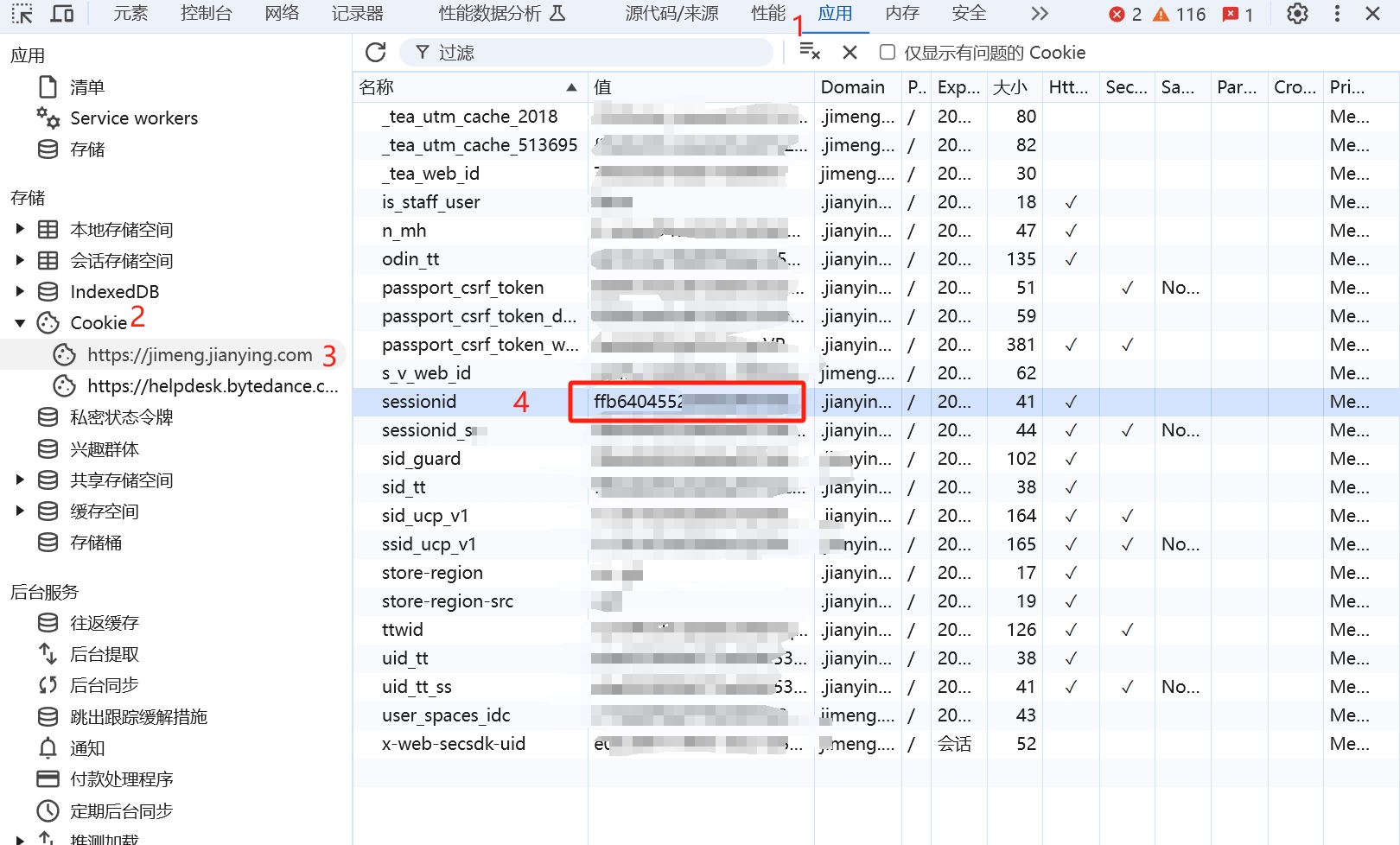

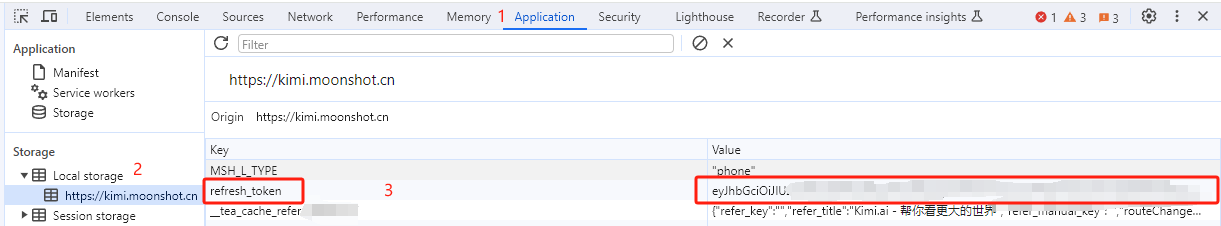

Get the `userToken` value from [DeepSeek](https://chat.deepseek.com/)

|

||||

|

||||

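Open DeepSeek and start any conversation, then press F12 to open the developer tools. Under Application > LocalStorage, find the value stored in `userToken`, copy it, and enter it as the API key in a tool such as Lobechat or CherryStudio. The API address is the IP and port of your deployment, for example `https://192.168.1.105:8001/v1/chat/completions`. Note that some tools only need `https://192.168.1.105:8001/`.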
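For a quick check without a chat client, the request described above can be sketched with `curl`. This is only an illustration under stated assumptions: that the service accepts the `userToken` value as an OpenAI-style `Authorization: Bearer` key, that the model is named `deepseek`, and that the body follows the usual chat-completions shape. The helper names `auth_header` and `chat_once` are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: build the Authorization header from a userToken value.
# Assumption: the service accepts the token as an OpenAI-style Bearer key.
auth_header() {
    printf 'Authorization: Bearer %s\n' "$1"
}

# Hypothetical helper: send one chat request to the deployed service.
#   $1 = base URL of your deployment, without a trailing slash
#   $2 = userToken value
#   $3 = prompt (must not contain double quotes or backslashes,
#        because it is pasted into the JSON body as-is)
# The "deepseek" model name and the JSON body shape are assumptions.
chat_once() {
    curl -fsS "$1/v1/chat/completions" \
        -H "$(auth_header "$2")" \
        -H 'Content-Type: application/json' \
        -d "{\"model\":\"deepseek\",\"messages\":[{\"role\":\"user\",\"content\":\"$3\"}]}"
}
```

A call would then look like `chat_once "<base URL>" "<userToken value>" "hello"`, with both placeholders replaced by your own values.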

|

||||

|

||||

[](https://cdn.jsdelivr.net/gh/LLM-Red-Team/deepseek-free-api@master/doc/example-0.png)

|

||||

|

||||

### Multiple accounts

|

||||

|

||||

Currently a single account can only have *one* output stream at a time. You can supply the `userToken` values of several accounts, joined with `,`:

|

||||

|

||||

```

|

||||

API key: TOKEN1,TOKEN2,TOKEN3

|

||||

```

|

||||

|

||||

The service picks one of them for each request.
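Building the combined key by hand is error-prone once there are more than a few tokens. A minimal sketch of joining them in a script follows; the `join_tokens` name is hypothetical, and the tokens are assumed to contain no commas themselves.

```shell
#!/bin/sh
# Hypothetical helper: join any number of userToken values with commas.
# The body runs in a subshell, so changing IFS does not leak to the caller.
join_tokens() (
    IFS=,
    # "$*" joins all arguments using the first character of IFS.
    printf '%s\n' "$*"
)
```

`join_tokens TOKEN1 TOKEN2 TOKEN3` produces the same `TOKEN1,TOKEN2,TOKEN3` string shown above, ready to paste into the API key field.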

|

||||

|

|

@ -0,0 +1,24 @@

|

|||

name: Deepseek-Free-API

|

||||

tags:

|

||||

- AI / 大模型

|

||||

title: DeepSeek-V3 & R1大模型逆向API

|

||||

description: DeepSeek V3 Free 服务

|

||||

additionalProperties:

|

||||

key: deepseek-free-api

|

||||

name: Deepseek-Free-API

|

||||

tags:

|

||||

- AI

|

||||

- Tools

|

||||

shortDescZh: DeepSeek-V3 & R1大模型逆向API

|

||||

shortDescEn: A 1Panel deployment for deepseek-free-api

|

||||

type: website

|

||||

crossVersionUpdate: true

|

||||

limit: 0

|

||||

recommend: 0

|

||||

architectures:

|

||||

- amd64

|

||||

- arm64

|

||||

|

||||

website: https://platform.deepseek.com/

|

||||

github: https://github.com/LLM-Red-Team/deepseek-free-api

|

||||

document: https://github.com/LLM-Red-Team/deepseek-free-api

|

||||

|

|

@ -0,0 +1,10 @@

|

|||

additionalProperties:

|

||||

formFields:

|

||||

- default: "8001"

|

||||

edit: true

|

||||

envKey: PANEL_APP_PORT_HTTP

|

||||

labelEn: Service Port

|

||||

labelZh: 服务端口

|

||||

required: true

|

||||

rule: paramPort

|

||||

type: number

|

||||

|

|

@ -0,0 +1,16 @@

|

|||

services:

|

||||

deepseek-free-api:

|

||||

image: vinlic/deepseek-free-api:latest

|

||||

container_name: ${CONTAINER_NAME}

|

||||

ports:

|

||||

- ${PANEL_APP_PORT_HTTP}:8000

|

||||

networks:

|

||||

- 1panel-network

|

||||

environment:

|

||||

- TZ=Asia/Shanghai

|

||||

labels:

|

||||

createdBy: Apps

|

||||

restart: always

|

||||

networks:

|

||||

1panel-network:

|

||||

external: true

|

||||

|


|

|

@ -0,0 +1,13 @@

|

|||

# 1Panel Apps

|

||||

|

||||

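This is a generic app template for the 1Panel App Store. It is designed to simplify adapting Docker applications so that users can easily integrate the apps they need into the 1Panel App Store.

It solves the problem that apps installed outside the store cannot use 1Panel's snapshot and app backup features.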

|

||||

|

||||

## Usage

|

||||

|

||||

- Adjust the parameters on the installation screen as needed.

|

||||

|

||||

- Alternatively, ignore the parameters offered on the installation screen, tick `"Advanced Settings"`, tick `"Edit compose file"`, and use a custom `docker-compose.yml` file.

|

||||

|

|

@ -0,0 +1,9 @@

|

|||

CONTAINER_NAME="1panel-apps"

|

||||

DATA_PATH="./data"

|

||||

DATA_PATH_INTERNAL="/data"

|

||||

ENV1=""

|

||||

IMAGE=""

|

||||

PANEL_APP_PORT_HTTP=40329

|

||||

PANEL_APP_PORT_HTTP_INTERNAL=40329

|

||||

RESTART_POLICY="always"

|

||||

TIME_ZONE="Asia/Shanghai"

|

||||

|

|

@ -0,0 +1,69 @@

|

|||

additionalProperties:

|

||||

formFields:

|

||||

- default: ""

|

||||

edit: true

|

||||

envKey: IMAGE

|

||||

labelEn: Docker Image

|

||||

labelZh: Docker 镜像

|

||||

required: true

|

||||

type: text

|

||||

- default: "always"

|

||||

edit: true

|

||||

envKey: RESTART_POLICY

|

||||

labelEn: Restart Policy

|

||||

labelZh: 重启策略

|

||||

required: true

|

||||

type: select

|

||||

values:

|

||||

- label: "Always"

|

||||

value: "always"

|

||||

- label: "Unless Stopped"

|

||||

value: "unless-stopped"

|

||||

- label: "On Failure"

|

||||

value: "on-failure"

|

||||

- label: "No"

|

||||

value: "no"

|

||||

- default: "40329"

|

||||

edit: true

|

||||

envKey: PANEL_APP_PORT_HTTP

|

||||

labelEn: Port

|

||||

labelZh: 端口

|

||||

required: true

|

||||

rule: paramPort

|

||||

type: number

|

||||

- default: "40329"

|

||||

edit: true

|

||||

envKey: PANEL_APP_PORT_HTTP_INTERNAL

|

||||

labelEn: Internal Port

|

||||

labelZh: 内部端口

|

||||

required: true

|

||||

rule: paramPort

|

||||

type: number

|

||||

- default: "./data"

|

||||

edit: true

|

||||

envKey: DATA_PATH

|

||||

labelEn: Data Path

|

||||

labelZh: 数据路径

|

||||

required: true

|

||||

type: text

|

||||

- default: "/data"

|

||||

edit: true

|

||||

envKey: DATA_PATH_INTERNAL

|

||||

labelEn: Internal Data Path

|

||||

labelZh: 内部数据路径

|

||||

required: true

|

||||

type: text

|

||||

- default: "Asia/Shanghai"

|

||||

edit: true

|

||||

envKey: TIME_ZONE

|

||||

labelEn: Time Zone

|

||||

labelZh: 时区

|

||||

required: true

|

||||

type: text

|

||||

- default: ""

|

||||

edit: true

|

||||

envKey: ENV1

|

||||

labelEn: Environment Variable 1 (Edit to remove comments in compose.yml to take effect)

|

||||

labelZh: 环境变量 1 (编辑去除compose.yml里的注释生效)

|

||||

required: false

|

||||

type: text

|

||||

|

|

@ -0,0 +1,22 @@

|

|||

services:

|

||||

1panel-apps:

|

||||

image: ${IMAGE}

|

||||

container_name: ${CONTAINER_NAME}

|

||||

restart: ${RESTART_POLICY}

|

||||

networks:

|

||||

- 1panel-network

|

||||

ports:

|

||||

- "${PANEL_APP_PORT_HTTP}:${PANEL_APP_PORT_HTTP_INTERNAL}"

|

||||

volumes:

|

||||

- "${DATA_PATH}:${DATA_PATH_INTERNAL}"

|

||||

environment:

|

||||

# 环境参数按需修改 (Modify the environment parameters as required)

|

||||

- TZ=${TIME_ZONE}

|

||||

# 删除以下行前的#号表示启用 (Delete the # sign in front of the following lines to indicate enablement)

|

||||

# - ${ENV1}=${ENV1}

|

||||

labels:

|

||||

createdBy: "Apps"

|

||||

|

||||

networks:

|

||||

1panel-network:

|

||||

external: true

|

||||

|

|

@ -0,0 +1,19 @@

|

|||

name: 1Panel Apps

|

||||

tags:

|

||||

- 建站

|

||||

title: 适配 1Panel 应用商店的通用应用模板

|

||||

description: 适配 1Panel 应用商店的通用应用模板

|

||||

additionalProperties:

|

||||

key: 1panel-apps

|

||||

name: 1Panel Apps

|

||||

tags:

|

||||

- Website

|

||||

shortDescZh: 适配 1Panel 应用商店的通用应用模板

|

||||

shortDescEn: Universal app template for the 1Panel App Store

|

||||

type: website

|

||||

crossVersionUpdate: true

|

||||

limit: 0

|

||||

recommend: 0

|

||||

website: https://github.com/okxlin/appstore

|

||||

github: https://github.com/okxlin/appstore

|

||||

document: https://github.com/okxlin/appstore

|

||||

|

|

@ -0,0 +1,8 @@

|

|||

CONTAINER_NAME="1panel-apps"

|

||||

DATA_PATH="./data"

|

||||

DATA_PATH_INTERNAL="/data"

|

||||

ENV1=""

|

||||

IMAGE=""

|

||||

PANEL_APP_PORT_HTTP=40329

|

||||

RESTART_POLICY="always"

|

||||

TIME_ZONE="Asia/Shanghai"

|

||||

|

|

@ -0,0 +1,61 @@

|

|||

additionalProperties:

|

||||

formFields:

|

||||

- default: ""

|

||||

edit: true

|

||||

envKey: IMAGE

|

||||

labelEn: Docker Image

|

||||

labelZh: Docker 镜像

|

||||

required: true

|

||||

type: text

|

||||

- default: "always"

|

||||

edit: true

|

||||

envKey: RESTART_POLICY

|

||||

labelEn: Restart Policy

|

||||

labelZh: 重启策略

|

||||

required: true

|

||||

type: select

|

||||

values:

|

||||

- label: "Always"

|

||||

value: "always"

|

||||

- label: "Unless Stopped"

|

||||

value: "unless-stopped"

|

||||

- label: "On Failure"

|

||||

value: "on-failure"

|

||||

- label: "No"

|

||||

value: "no"

|

||||

- default: "40329"

|

||||

edit: true

|

||||

envKey: PANEL_APP_PORT_HTTP

|

||||

labelEn: Port (determined by the Docker application itself)

|

||||

labelZh: 端口 (由 Docker 应用自身决定)

|

||||

required: true

|

||||

rule: paramPort

|

||||

type: number

|

||||

- default: "./data"

|

||||

edit: true

|

||||

envKey: DATA_PATH

|

||||

labelEn: Data Path

|

||||

labelZh: 数据路径

|

||||

required: true

|

||||

type: text

|

||||

- default: "/data"

|

||||

edit: true

|

||||

envKey: DATA_PATH_INTERNAL

|

||||

labelEn: Internal Data Path

|

||||

labelZh: 内部数据路径

|

||||

required: true

|

||||

type: text

|

||||

- default: "Asia/Shanghai"

|

||||

edit: true

|

||||

envKey: TIME_ZONE

|

||||

labelEn: Time Zone

|

||||

labelZh: 时区

|

||||

required: true

|

||||

type: text

|

||||

- default: ""

|

||||

edit: true

|

||||

envKey: ENV1

|

||||

labelEn: Environment Variable 1 (Edit to remove comments in compose.yml to take effect)

|

||||

labelZh: 环境变量 1 (编辑去除compose.yml里的注释生效)

|

||||

required: false

|

||||

type: text

|

||||

|

|

@ -0,0 +1,15 @@

|

|||

services:

|

||||

1panel-apps:

|

||||

image: ${IMAGE}

|

||||

container_name: ${CONTAINER_NAME}

|

||||

restart: ${RESTART_POLICY}

|

||||

network_mode: host

|

||||

volumes:

|

||||

- "${DATA_PATH}:${DATA_PATH_INTERNAL}"

|

||||

environment:

|

||||

# 环境参数按需修改 (Modify the environment parameters as required)

|

||||

- TZ=${TIME_ZONE}

|

||||

# 删除以下行前的#号表示启用 (Delete the # sign in front of the following lines to indicate enablement)

|

||||

# - ${ENV1}=${ENV1}

|

||||

labels:

|

||||

createdBy: "Apps"

|

||||

|


|

|

@ -0,0 +1,76 @@

|

|||

# Launching new servers with SSL certificates

|

||||

|

||||

## Short description

|

||||

|

||||

Docker Compose certbot configuration with backward compatibility (it also works without the certbot container).

|

||||

Run `docker compose --profile certbot up` to enable this feature.

|

||||

|

||||

## The simplest way for launching new servers with SSL certificates

|

||||

|

||||

1. Get Let's Encrypt certificates

|

||||

Set the following `.env` values:

|

||||

```properties

|

||||

NGINX_SSL_CERT_FILENAME=fullchain.pem

|

||||

NGINX_SSL_CERT_KEY_FILENAME=privkey.pem

|

||||

NGINX_ENABLE_CERTBOT_CHALLENGE=true

|

||||

CERTBOT_DOMAIN=your_domain.com

|

||||

CERTBOT_EMAIL=example@your_domain.com

|

||||

```

|

||||

Execute the commands:

|

||||

```shell

|

||||

docker network prune

|

||||

docker compose --profile certbot up --force-recreate -d

|

||||

```

|

||||

Then, after the containers have launched:

|

||||

```shell

|

||||

docker compose exec -it certbot /bin/sh /update-cert.sh

|

||||

```

|

||||

2. Edit the `.env` file and run `docker compose --profile certbot up` again.

|

||||

Additionally set this `.env` value:

|

||||

```properties

|

||||

NGINX_HTTPS_ENABLED=true

|

||||

```

|

||||

Execute the command:

|

||||

```shell

|

||||

docker compose --profile certbot up -d --no-deps --force-recreate nginx

|

||||

```

|

||||

You can then access your server over HTTPS.

|

||||

[https://your_domain.com](https://your_domain.com)
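Before launching, it can help to confirm that the `.env` keys from step 1 are actually set. A minimal sketch, assuming the `.env` file uses plain `KEY=value` lines; the `missing_certbot_keys` function name is hypothetical and not part of this repository:

```shell
#!/bin/sh
# Hypothetical helper: print every required key that has no non-empty value
# in the given env file. The key list mirrors the values set in step 1.
missing_certbot_keys() {
    env_file=$1
    for key in NGINX_SSL_CERT_FILENAME NGINX_SSL_CERT_KEY_FILENAME \
               NGINX_ENABLE_CERTBOT_CHALLENGE CERTBOT_DOMAIN CERTBOT_EMAIL; do
        # A key counts as set when a line "KEY=<something>" exists.
        grep -Eq "^${key}=.+" "$env_file" || printf '%s\n' "$key"
    done
}
```

`missing_certbot_keys .env` prints nothing when all five keys have values, so it can gate the `docker compose --profile certbot up` call in a deploy script.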

|

||||

|

||||

## SSL certificates renewal

|

||||

|

||||

To renew the SSL certificates, execute the commands below:

|

||||

|

||||

```shell

|

||||

docker compose exec -it certbot /bin/sh /update-cert.sh

|

||||

docker compose exec nginx nginx -s reload

|

||||

```
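If renewal is meant to run unattended, the two commands can be wrapped in a small function. The sketch below is an assumption-laden illustration rather than part of this repository: the `renew_certs` name is hypothetical, and it swaps `-it` for `-T` (the `docker compose exec` flag that disables TTY allocation) because cron jobs have no terminal attached.

```shell
#!/bin/sh
# Hypothetical wrapper around the two renewal commands above.
# $1 is the program to run (default: docker); pass "echo" for a dry run
# that only prints the arguments instead of executing anything.
renew_certs() {
    runner=${1:-docker}
    # Reload nginx only if the certificate update succeeded.
    "$runner" compose exec -T certbot /bin/sh /update-cert.sh &&
        "$runner" compose exec -T nginx nginx -s reload
}
```

Calling `renew_certs echo` first is a cheap way to review exactly what would be run before scheduling `renew_certs` itself.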

|

||||

|

||||

## Options for certbot

|

||||

|

||||

The `CERTBOT_OPTIONS` key can be helpful for testing, e.g.:

|

||||

|

||||

```properties

|

||||

CERTBOT_OPTIONS=--dry-run

|

||||

```

|

||||

|

||||

To apply changes to `CERTBOT_OPTIONS`, recreate the certbot container before updating the certificates.

|

||||

|

||||

```shell

|

||||

docker compose --profile certbot up -d --no-deps --force-recreate certbot

|

||||

docker compose exec -it certbot /bin/sh /update-cert.sh

|

||||

```

|

||||

|

||||

Then, reload the nginx container if necessary.

|

||||

|

||||

```shell

|

||||

docker compose exec nginx nginx -s reload

|

||||

```

|

||||

|

||||

## For legacy servers

|

||||

|

||||

To keep using the certificate files in the `nginx/ssl` directory as before, launch the containers WITHOUT the `--profile certbot` option.

|

||||

|

||||

```shell

|

||||

docker compose up -d

|

||||

```

|

||||

|

|

@ -0,0 +1,30 @@

|

|||

#!/bin/sh

|

||||

set -e

|

||||

|

||||

printf '%s\n' "Docker entrypoint script is running"

|

||||

|

||||

printf '\n%s\n' "Checking specific environment variables:"

|

||||

printf '%s\n' "CERTBOT_EMAIL: ${CERTBOT_EMAIL:-Not set}"

|

||||

printf '%s\n' "CERTBOT_DOMAIN: ${CERTBOT_DOMAIN:-Not set}"

|

||||

printf '%s\n' "CERTBOT_OPTIONS: ${CERTBOT_OPTIONS:-Not set}"

|

||||

|

||||

printf '\n%s\n' "Checking mounted directories:"

|

||||

for dir in "/etc/letsencrypt" "/var/www/html" "/var/log/letsencrypt"; do

|

||||

if [ -d "$dir" ]; then

|

||||

printf '%s\n' "$dir exists. Contents:"

|

||||

ls -la "$dir"

|

||||

else

|

||||

printf '%s\n' "$dir does not exist."

|

||||

fi

|

||||

done

|

||||

|

||||

printf '\n%s\n' "Generating update-cert.sh from template"

|

||||

sed -e "s|\${CERTBOT_EMAIL}|$CERTBOT_EMAIL|g" \

|

||||

-e "s|\${CERTBOT_DOMAIN}|$CERTBOT_DOMAIN|g" \

|

||||

-e "s|\${CERTBOT_OPTIONS}|$CERTBOT_OPTIONS|g" \

|

||||

/update-cert.template.txt > /update-cert.sh

|

||||

|

||||

chmod +x /update-cert.sh

|

||||

|

||||

printf '\n%s\n' "Executing command: $*"

|

||||

exec "$@"

|

||||

|

|

@ -0,0 +1,19 @@

|

|||

#!/bin/bash

|

||||

set -e

|

||||

|

||||

DOMAIN="${CERTBOT_DOMAIN}"

|

||||

EMAIL="${CERTBOT_EMAIL}"

|

||||

OPTIONS="${CERTBOT_OPTIONS}"

|

||||

CERT_NAME="${DOMAIN}" # Use the domain name as the certificate name

|

||||

|

||||

# Check if the certificate already exists

|

||||

if [ -f "/etc/letsencrypt/renewal/${CERT_NAME}.conf" ]; then

|

||||

echo "Certificate exists. Attempting to renew..."

|

||||

certbot renew --noninteractive --cert-name ${CERT_NAME} --webroot --webroot-path=/var/www/html --email ${EMAIL} --agree-tos --no-eff-email ${OPTIONS}

|

||||

else

|

||||

echo "Certificate does not exist. Obtaining a new certificate..."

|

||||

certbot certonly --noninteractive --webroot --webroot-path=/var/www/html --email ${EMAIL} --agree-tos --no-eff-email -d ${DOMAIN} ${OPTIONS}

|

||||

fi

|

||||

echo "Certificate operation successful"

|

||||

# Note: Nginx reload should be handled outside this container

|

||||

echo "Please ensure to reload Nginx to apply any certificate changes."

|

||||

|

|

@ -0,0 +1,4 @@

|

|||

FROM couchbase/server:latest AS stage_base

|

||||

# FROM couchbase:latest AS stage_base

|

||||

COPY init-cbserver.sh /opt/couchbase/init/

|

||||

RUN chmod +x /opt/couchbase/init/init-cbserver.sh

|

||||

|

|

@ -0,0 +1,44 @@

|

|||

#!/bin/bash

|

||||

# used to start couchbase server - can't get around this as docker compose only allows you to start one command - so we have to start couchbase like the standard couchbase Dockerfile would

|

||||

# https://github.com/couchbase/docker/blob/master/enterprise/couchbase-server/7.2.0/Dockerfile#L88

|

||||

|

||||

/entrypoint.sh couchbase-server &

|

||||

|

||||

# track if setup is complete so we don't try to setup again

|

||||

FILE=/opt/couchbase/init/setupComplete.txt

|

||||

|

||||

if ! [ -f "$FILE" ]; then

|

||||

# used to automatically create the cluster based on environment variables

|

||||

# https://docs.couchbase.com/server/current/cli/cbcli/couchbase-cli-cluster-init.html

|

||||

|

||||

echo $COUCHBASE_ADMINISTRATOR_USERNAME ":" $COUCHBASE_ADMINISTRATOR_PASSWORD

|

||||

|

||||

sleep 20s

|

||||

/opt/couchbase/bin/couchbase-cli cluster-init -c 127.0.0.1 \

|

||||

--cluster-username $COUCHBASE_ADMINISTRATOR_USERNAME \

|

||||

--cluster-password $COUCHBASE_ADMINISTRATOR_PASSWORD \

|

||||

--services data,index,query,fts \

|

||||

--cluster-ramsize $COUCHBASE_RAM_SIZE \

|

||||

--cluster-index-ramsize $COUCHBASE_INDEX_RAM_SIZE \

|

||||

--cluster-eventing-ramsize $COUCHBASE_EVENTING_RAM_SIZE \

|

||||

--cluster-fts-ramsize $COUCHBASE_FTS_RAM_SIZE \

|

||||

--index-storage-setting default

|

||||

|

||||

sleep 2s

|

||||

|

||||

# used to auto create the bucket based on environment variables

|

||||

# https://docs.couchbase.com/server/current/cli/cbcli/couchbase-cli-bucket-create.html

|

||||

|

||||

/opt/couchbase/bin/couchbase-cli bucket-create -c localhost:8091 \

|

||||

--username $COUCHBASE_ADMINISTRATOR_USERNAME \

|

||||

--password $COUCHBASE_ADMINISTRATOR_PASSWORD \

|

||||

--bucket $COUCHBASE_BUCKET \

|

||||

--bucket-ramsize $COUCHBASE_BUCKET_RAMSIZE \

|

||||

--bucket-type couchbase

|

||||

|

||||

# create file so we know that the cluster is setup and don't run the setup again

|

||||

touch $FILE

|

||||

fi

|

||||

# docker compose will stop the container from running unless we do this

|

||||

# known issue and workaround

|

||||

tail -f /dev/null

|

||||

|

|

@ -0,0 +1,25 @@

|

|||

#!/bin/bash

|

||||

|

||||

set -e

|

||||

|

||||

if [ "${VECTOR_STORE}" = "elasticsearch-ja" ]; then

|

||||

# Check if the ICU tokenizer plugin is installed

|

||||

if ! /usr/share/elasticsearch/bin/elasticsearch-plugin list | grep -q analysis-icu; then

|

||||

printf '%s\n' "Installing the ICU tokenizer plugin"

|

||||

if ! /usr/share/elasticsearch/bin/elasticsearch-plugin install analysis-icu; then

|

||||

printf '%s\n' "Failed to install the ICU tokenizer plugin"

|

||||

exit 1

|

||||

fi

|

||||

fi

|

||||

# Check if the Japanese language analyzer plugin is installed

|

||||

if ! /usr/share/elasticsearch/bin/elasticsearch-plugin list | grep -q analysis-kuromoji; then

|

||||

printf '%s\n' "Installing the Japanese language analyzer plugin"

|

||||

if ! /usr/share/elasticsearch/bin/elasticsearch-plugin install analysis-kuromoji; then

|

||||

printf '%s\n' "Failed to install the Japanese language analyzer plugin"

|

||||

exit 1

|

||||

fi

|

||||

fi

|

||||

fi

|

||||

|

||||

# Run the original entrypoint script

|

||||

exec /bin/tini -- /usr/local/bin/docker-entrypoint.sh

|

||||

|

|

@ -0,0 +1,48 @@

|

|||

# Please do not directly edit this file. Instead, modify the .env variables related to NGINX configuration.

|

||||

|

||||

server {

|

||||

listen ${NGINX_PORT};

|

||||

server_name ${NGINX_SERVER_NAME};

|

||||

|

||||

location /console/api {

|

||||

proxy_pass http://api:5001;

|

||||

include proxy.conf;

|

||||

}

|

||||

|

||||

location /api {

|

||||

proxy_pass http://api:5001;

|

||||

include proxy.conf;

|

||||

}

|

||||

|

||||

location /v1 {

|

||||

proxy_pass http://api:5001;

|

||||

include proxy.conf;

|

||||

}

|

||||

|

||||

location /files {

|

||||

proxy_pass http://api:5001;

|

||||

include proxy.conf;

|

||||

}

|

||||

|

||||

location /explore {

|

||||

proxy_pass http://web:3000;

|

||||

include proxy.conf;

|

||||

}

|

||||

|

||||

location /e/ {

|

||||

proxy_pass http://plugin_daemon:5002;

|

||||

proxy_set_header Dify-Hook-Url $scheme://$host$request_uri;

|

||||

include proxy.conf;

|

||||

}

|

||||

|

||||

location / {

|

||||

proxy_pass http://web:3000;

|

||||

include proxy.conf;

|

||||

}

|

||||

|

||||

# placeholder for acme challenge location

|

||||

${ACME_CHALLENGE_LOCATION}

|

||||

|

||||

# placeholder for https config defined in https.conf.template

|

||||

${HTTPS_CONFIG}

|

||||

}
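The template above uses only prefix `location` blocks, and for prefix locations nginx selects the longest matching prefix. A rough sketch of that selection, using the prefixes and upstreams from the template, is shown below. The `route` function is a hypothetical illustration, not part of nginx or of this repository.

```shell
# Pick the upstream for a request path by longest matching prefix.
route() {
  local path="$1" best="" upstream="" entry prefix target
  for entry in \
    "/console/api=api:5001" "/api=api:5001" "/v1=api:5001" "/files=api:5001" \
    "/explore=web:3000" "/e/=plugin_daemon:5002" "/=web:3000"; do
    prefix="${entry%%=*}"
    target="${entry#*=}"
    case "$path" in
      "$prefix"*)
        # Keep the candidate only if its prefix is longer than the current best.
        if [ "${#prefix}" -gt "${#best}" ]; then
          best="$prefix"
          upstream="$target"
        fi
        ;;
    esac
  done
  printf '%s\n' "$upstream"
}

route /console/api/setup   # api:5001
route /e/hook              # plugin_daemon:5002
route /apps                # web:3000
```

Anything that matches no specific prefix falls through to `/`, which is why the web frontend handles all remaining paths.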

|

||||

|

|

@ -0,0 +1,39 @@

|

|||

#!/bin/bash

|

||||

|

||||

if [ "${NGINX_HTTPS_ENABLED}" = "true" ]; then

|

||||

# Check if the certificate and key files for the specified domain exist

|

||||

if [ -n "${CERTBOT_DOMAIN}" ] && \

|

||||

[ -f "/etc/letsencrypt/live/${CERTBOT_DOMAIN}/${NGINX_SSL_CERT_FILENAME}" ] && \

|

||||

[ -f "/etc/letsencrypt/live/${CERTBOT_DOMAIN}/${NGINX_SSL_CERT_KEY_FILENAME}" ]; then

|

||||

SSL_CERTIFICATE_PATH="/etc/letsencrypt/live/${CERTBOT_DOMAIN}/${NGINX_SSL_CERT_FILENAME}"

|

||||

SSL_CERTIFICATE_KEY_PATH="/etc/letsencrypt/live/${CERTBOT_DOMAIN}/${NGINX_SSL_CERT_KEY_FILENAME}"

|

||||

else

|

||||

SSL_CERTIFICATE_PATH="/etc/ssl/${NGINX_SSL_CERT_FILENAME}"

|

||||

SSL_CERTIFICATE_KEY_PATH="/etc/ssl/${NGINX_SSL_CERT_KEY_FILENAME}"

|

||||

fi

|

||||

export SSL_CERTIFICATE_PATH

|

||||

export SSL_CERTIFICATE_KEY_PATH

|

||||

|

||||

# set the HTTPS_CONFIG environment variable to the content of the https.conf.template

|

||||

HTTPS_CONFIG=$(envsubst < /etc/nginx/https.conf.template)

|

||||

export HTTPS_CONFIG

|

||||

# Substitute the HTTPS_CONFIG in the default.conf.template with content from https.conf.template

|

||||

envsubst '${HTTPS_CONFIG}' < /etc/nginx/conf.d/default.conf.template > /etc/nginx/conf.d/default.conf

|

||||

fi

|

||||

|

||||

if [ "${NGINX_ENABLE_CERTBOT_CHALLENGE}" = "true" ]; then

|

||||

ACME_CHALLENGE_LOCATION='location /.well-known/acme-challenge/ { root /var/www/html; }'

|

||||

else

|

||||

ACME_CHALLENGE_LOCATION=''

|

||||

fi

|

||||

export ACME_CHALLENGE_LOCATION

|

||||

|

||||

env_vars=$(printenv | cut -d= -f1 | sed 's/^/$/g' | paste -sd, -)

|

||||

|

||||

envsubst "$env_vars" < /etc/nginx/nginx.conf.template > /etc/nginx/nginx.conf

|

||||

envsubst "$env_vars" < /etc/nginx/proxy.conf.template > /etc/nginx/proxy.conf

|

||||

|

||||

envsubst < /etc/nginx/conf.d/default.conf.template > /etc/nginx/conf.d/default.conf

|

||||

|

||||

# Start Nginx using the default entrypoint

|

||||

exec nginx -g 'daemon off;'
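The `env_vars` line in the script above builds a comma-separated list such as `$A,$B` from the names of the defined environment variables. Passing that list to `envsubst` restricts substitution to those names, so nginx runtime variables like `$host` or `$scheme` in the templates are left alone. A small sketch of the list construction follows. It feeds fixed `NAME=value` lines instead of `printenv` so the result is deterministic, and the helper name `names_to_envsubst_list` is hypothetical.

```shell
# Turn NAME=value lines (as printed by printenv) into "$NAME1,$NAME2,...".
names_to_envsubst_list() {
  cut -d= -f1 | sed 's/^/$/' | paste -sd, -
}

list=$(printf '%s\n' 'NGINX_PORT=80' 'NGINX_SERVER_NAME=_' | names_to_envsubst_list)
printf '%s\n' "$list"
```

One caveat of this approach, as far as the pipeline goes: a variable whose value contains a newline produces extra lines in `printenv` output, and those would end up in the list as spurious names.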

|

||||

|

|

@ -0,0 +1,9 @@

|

|||

# Please do not directly edit this file. Instead, modify the .env variables related to NGINX configuration.

|

||||

|

||||

listen ${NGINX_SSL_PORT} ssl;

|

||||

ssl_certificate ${SSL_CERTIFICATE_PATH};

|

||||

ssl_certificate_key ${SSL_CERTIFICATE_KEY_PATH};

|

||||

ssl_protocols ${NGINX_SSL_PROTOCOLS};

|

||||

ssl_prefer_server_ciphers on;

|

||||

ssl_session_cache shared:SSL:10m;

|

||||

ssl_session_timeout 10m;

|

||||

|

|

@ -0,0 +1,34 @@

|

|||

# Please do not directly edit this file. Instead, modify the .env variables related to NGINX configuration.

|

||||

|

||||

user nginx;

|

||||

worker_processes ${NGINX_WORKER_PROCESSES};

|

||||

|

||||

error_log /var/log/nginx/error.log notice;

|

||||

pid /var/run/nginx.pid;

|

||||

|

||||

|

||||

events {

|

||||

worker_connections 1024;

|

||||

}

|

||||

|

||||

|

||||

http {

|

||||

include /etc/nginx/mime.types;

|

||||

default_type application/octet-stream;

|

||||

|

||||

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

|

||||

'$status $body_bytes_sent "$http_referer" '

|

||||

'"$http_user_agent" "$http_x_forwarded_for"';

|

||||

|

||||

access_log /var/log/nginx/access.log main;

|

||||

|

||||

sendfile on;

|

||||

#tcp_nopush on;

|

||||

|

||||

keepalive_timeout ${NGINX_KEEPALIVE_TIMEOUT};

|

||||

|

||||

#gzip on;

|

||||

client_max_body_size ${NGINX_CLIENT_MAX_BODY_SIZE};

|

||||

|

||||

include /etc/nginx/conf.d/*.conf;

|

||||

}

|

||||

|

|

@ -0,0 +1,11 @@

|

|||

# Please do not directly edit this file. Instead, modify the .env variables related to NGINX configuration.

|

||||

|

||||

proxy_set_header Host $host;

|

||||

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

|

||||

proxy_set_header X-Forwarded-Proto $scheme;

|

||||

proxy_set_header X-Forwarded-Port $server_port;

|

||||

proxy_http_version 1.1;

|

||||

proxy_set_header Connection "";

|

||||

proxy_buffering off;

|

||||

proxy_read_timeout ${NGINX_PROXY_READ_TIMEOUT};

|

||||

proxy_send_timeout ${NGINX_PROXY_SEND_TIMEOUT};

|

||||

|

|

@ -0,0 +1,42 @@

|

|||

#!/bin/bash

|

||||

|

||||

# Modified based on Squid OCI image entrypoint

|

||||

|

||||

# This entrypoint aims to forward the squid logs to stdout to assist users of

|

||||

# common container related tooling (e.g., kubernetes, docker-compose, etc) to

|

||||

# access the service logs.

|

||||

|

||||

# Moreover, it invokes the squid binary, leaving all the desired parameters to

|

||||

# be provided by the "command" passed to the spawned container. If no command

|

||||

# is provided by the user, the default behavior (as per the CMD statement in

|

||||

# the Dockerfile) will be to use Ubuntu's default configuration [1] and run

|

||||

# squid with the "-NYC" options to mimic the behavior of the Ubuntu provided

|

||||

# systemd unit.

|

||||

|

||||

# [1] The default configuration is changed in the Dockerfile to allow local

|

||||

# network connections. See the Dockerfile for further information.

|

||||

|

||||

echo "[ENTRYPOINT] re-create snakeoil self-signed certificate removed in the build process"

|

||||

if [ ! -f /etc/ssl/private/ssl-cert-snakeoil.key ]; then

|

||||

/usr/sbin/make-ssl-cert generate-default-snakeoil --force-overwrite > /dev/null 2>&1

|

||||

fi

|

||||

|

||||

tail -F /var/log/squid/access.log 2>/dev/null &

|

||||

tail -F /var/log/squid/error.log 2>/dev/null &

|

||||

tail -F /var/log/squid/store.log 2>/dev/null &

|

||||

tail -F /var/log/squid/cache.log 2>/dev/null &

|

||||

|

||||

# Replace environment variables in the template and output to the squid.conf

|

||||

echo "[ENTRYPOINT] replacing environment variables in the template"

|

||||

awk '{

|

||||

while(match($0, /\${[A-Za-z_][A-Za-z_0-9]*}/)) {

|

||||

var = substr($0, RSTART+2, RLENGTH-3)

|

||||

val = ENVIRON[var]

|

||||

$0 = substr($0, 1, RSTART-1) val substr($0, RSTART+RLENGTH)

|

||||

}

|

||||

print

|

||||

}' /etc/squid/squid.conf.template > /etc/squid/squid.conf

|

||||

|

||||

/usr/sbin/squid -Nz

|

||||

echo "[ENTRYPOINT] starting squid"

|

||||

/usr/sbin/squid -f /etc/squid/squid.conf -NYC 1
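The `awk` loop in the script above replaces every `${NAME}` placeholder in the template with the value of the matching environment variable. The sketch below runs the same loop on a one-line sample. The function name `render_template` and the sample values are assumptions for illustration, and the braces in the pattern are escaped here for portability across awk implementations.

```shell
# Replace each ${NAME} on stdin with ENVIRON[NAME]; unset names become empty strings.
render_template() {
  awk '{
    while (match($0, /\$\{[A-Za-z_][A-Za-z_0-9]*\}/)) {
      # RSTART points at "$"; skip "${" and drop the trailing "}".
      var = substr($0, RSTART + 2, RLENGTH - 3)
      $0 = substr($0, 1, RSTART - 1) ENVIRON[var] substr($0, RSTART + RLENGTH)
    }
    print
  }'
}

export HTTP_PORT=3128
rendered=$(printf '%s\n' 'http_port ${HTTP_PORT}' | render_template)
printf '%s\n' "$rendered"
```

Because the loop rescans the line after each replacement, a value that itself contains a `${NAME}` placeholder is expanded again, so values should not reference themselves.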

|

||||

|

|

@ -0,0 +1,51 @@

|

|||

acl localnet src 0.0.0.1-0.255.255.255 # RFC 1122 "this" network (LAN)

|

||||

acl localnet src 10.0.0.0/8 # RFC 1918 local private network (LAN)

|

||||

acl localnet src 100.64.0.0/10 # RFC 6598 shared address space (CGN)

|

||||

acl localnet src 169.254.0.0/16 # RFC 3927 link-local (directly plugged) machines

|

||||

acl localnet src 172.16.0.0/12 # RFC 1918 local private network (LAN)

|

||||

acl localnet src 192.168.0.0/16 # RFC 1918 local private network (LAN)

|

||||

acl localnet src fc00::/7 # RFC 4193 local private network range

|

||||

acl localnet src fe80::/10 # RFC 4291 link-local (directly plugged) machines

|

||||

acl SSL_ports port 443

|

||||

# acl SSL_ports port 1025-65535 # Enable the configuration to resolve this issue: https://github.com/langgenius/dify/issues/12792

|

||||

acl Safe_ports port 80 # http

|

||||

acl Safe_ports port 21 # ftp

|

||||

acl Safe_ports port 443 # https

|

||||

acl Safe_ports port 70 # gopher

|

||||

acl Safe_ports port 210 # wais

|

||||

acl Safe_ports port 1025-65535 # unregistered ports

|

||||

acl Safe_ports port 280 # http-mgmt

|

||||

acl Safe_ports port 488 # gss-http

|

||||

acl Safe_ports port 591 # filemaker

|

||||

acl Safe_ports port 777 # multiling http

|

||||

acl CONNECT method CONNECT

|

||||

http_access deny !Safe_ports

|

||||

http_access deny CONNECT !SSL_ports

|

||||

http_access allow localhost manager

|

||||

http_access deny manager

|

||||

http_access allow localhost

|

||||

include /etc/squid/conf.d/*.conf

|

||||

http_access deny all

|

||||

|

||||

################################## Proxy Server ################################

|

||||

http_port ${HTTP_PORT}

|

||||

coredump_dir ${COREDUMP_DIR}

|

||||

refresh_pattern ^ftp: 1440 20% 10080

|

||||

refresh_pattern ^gopher: 1440 0% 1440

|

||||

refresh_pattern -i (/cgi-bin/|\?) 0 0% 0

|

||||

refresh_pattern \/(Packages|Sources)(|\.bz2|\.gz|\.xz)$ 0 0% 0 refresh-ims

|

||||

refresh_pattern \/Release(|\.gpg)$ 0 0% 0 refresh-ims

|

||||

refresh_pattern \/InRelease$ 0 0% 0 refresh-ims

|

||||

refresh_pattern \/(Translation-.*)(|\.bz2|\.gz|\.xz)$ 0 0% 0 refresh-ims

|

||||

refresh_pattern . 0 20% 4320

|

||||

|

||||

|

||||

# cache_dir ufs /var/spool/squid 100 16 256

|

||||

# upstream proxy, set to your own upstream proxy IP to avoid SSRF attacks

|

||||

# cache_peer 172.1.1.1 parent 3128 0 no-query no-digest no-netdb-exchange default

|

||||

|

||||

################################## Reverse Proxy To Sandbox ################################

|

||||

http_port ${REVERSE_PROXY_PORT} accel vhost

|

||||

cache_peer ${SANDBOX_HOST} parent ${SANDBOX_PORT} 0 no-query originserver

|

||||

acl src_all src all

|

||||

http_access allow src_all

|

||||

|

|

@ -0,0 +1,13 @@

|

|||

#!/usr/bin/env bash

|

||||

|

||||

DB_INITIALIZED="/opt/oracle/oradata/dbinit"

|

||||

#[ -f ${DB_INITIALIZED} ] && exit

|

||||

#touch ${DB_INITIALIZED}

|

||||

if [ -f "${DB_INITIALIZED}" ]; then

|

||||

echo 'File exists. The database has already been initialized.'

|

||||

exit

|

||||

else

|

||||

echo 'File does not exist. Initializing the database on first startup.'

|

||||

"$ORACLE_HOME"/bin/sqlplus -s "/ as sysdba" @"/opt/oracle/scripts/startup/init_user.script";

|

||||

touch "${DB_INITIALIZED}"

|

||||

fi
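The script above uses a marker file so that the one-time database setup is skipped on later container starts. A minimal sketch of that pattern as a reusable helper is shown below. `run_once` and `init_step` are hypothetical names, and unlike the script, this variant creates the marker only when the setup command succeeds.

```shell
# Run a command once; later calls are skipped while the marker file exists.
run_once() {
  local marker="$1"
  shift
  if [ -f "$marker" ]; then
    echo "already initialized"
    return 0
  fi
  "$@" && touch "$marker"
}

tmpdir=$(mktemp -d)
count=0
init_step() { count=$((count + 1)); }   # stand-in for the real setup command

run_once "$tmpdir/dbinit" init_step     # runs init_step and creates the marker
run_once "$tmpdir/dbinit" init_step     # skipped, marker already exists
echo "init ran $count time(s)"
```

Creating the marker only on success means a failed setup is retried on the next start instead of being silently skipped.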

|

||||

|

|

@ -0,0 +1,10 @@

|

|||

show pdbs;

|

||||

ALTER SYSTEM SET PROCESSES=500 SCOPE=SPFILE;

|

||||

ALTER SESSION SET CONTAINER = freepdb1;

|

||||

create user dify identified by dify DEFAULT TABLESPACE users quota unlimited on users;

|

||||

grant DB_DEVELOPER_ROLE to dify;

|

||||

|

||||

BEGIN

|

||||

CTX_DDL.CREATE_PREFERENCE('my_chinese_vgram_lexer','CHINESE_VGRAM_LEXER');

|

||||

END;

|

||||

/

|

||||

|

|

@ -0,0 +1,4 @@

|

|||

# PD Configuration File reference:

|

||||

# https://docs.pingcap.com/tidb/stable/pd-configuration-file#pd-configuration-file

|

||||

[replication]

|

||||

max-replicas = 1

|

||||

|

|

@ -0,0 +1,13 @@

|

|||

# TiFlash tiflash-learner.toml Configuration File reference:

|

||||

# https://docs.pingcap.com/tidb/stable/tiflash-configuration#configure-the-tiflash-learnertoml-file

|

||||

|

||||

log-file = "/logs/tiflash_tikv.log"

|

||||

|

||||

[server]

|

||||

engine-addr = "tiflash:4030"

|

||||

addr = "0.0.0.0:20280"

|

||||

advertise-addr = "tiflash:20280"

|

||||

status-addr = "tiflash:20292"

|

||||

|

||||

[storage]

|

||||

data-dir = "/data/flash"

|

||||

|

|

@ -0,0 +1,19 @@

|

|||

# TiFlash tiflash.toml Configuration File reference:

|

||||

# https://docs.pingcap.com/tidb/stable/tiflash-configuration#configure-the-tiflashtoml-file

|

||||

|

||||

listen_host = "0.0.0.0"

|

||||

path = "/data"

|

||||

|

||||

[flash]

|

||||

tidb_status_addr = "tidb:10080"

|

||||

service_addr = "tiflash:4030"

|

||||

|

||||

[flash.proxy]

|

||||

config = "/tiflash-learner.toml"

|

||||

|

||||

[logger]

|

||||

errorlog = "/logs/tiflash_error.log"

|

||||

log = "/logs/tiflash.log"

|

||||

|

||||

[raft]

|

||||

pd_addr = "pd0:2379"

|

||||

|

|

@ -0,0 +1,62 @@

|

|||

services:

|

||||

pd0:

|

||||

image: pingcap/pd:v8.5.1

|

||||

# ports:

|

||||

# - "2379"

|

||||

volumes:

|

||||

- ./config/pd.toml:/pd.toml:ro

|

||||

- ./volumes/data:/data

|

||||

- ./volumes/logs:/logs

|

||||

command:

|

||||

- --name=pd0

|

||||

- --client-urls=http://0.0.0.0:2379

|

||||

- --peer-urls=http://0.0.0.0:2380

|

||||

- --advertise-client-urls=http://pd0:2379

|

||||

- --advertise-peer-urls=http://pd0:2380

|

||||

- --initial-cluster=pd0=http://pd0:2380

|

||||

- --data-dir=/data/pd

|

||||

- --config=/pd.toml

|

||||

- --log-file=/logs/pd.log

|

||||

restart: on-failure

|

||||

tikv:

|

||||

image: pingcap/tikv:v8.5.1

|

||||

volumes:

|

||||

- ./volumes/data:/data

|

||||

- ./volumes/logs:/logs

|

||||

command:

|

||||

- --addr=0.0.0.0:20160

|

||||

- --advertise-addr=tikv:20160

|

||||

- --status-addr=tikv:20180

|

||||

- --data-dir=/data/tikv

|

||||

- --pd=pd0:2379

|

||||

- --log-file=/logs/tikv.log

|

||||

depends_on:

|

||||

- "pd0"

|

||||

restart: on-failure

|

||||

tidb:

|

||||

image: pingcap/tidb:v8.5.1

|

||||

# ports:

|

||||

# - "4000:4000"

|

||||

volumes:

|

||||

- ./volumes/logs:/logs

|

||||

command:

|

||||

- --advertise-address=tidb

|

||||

- --store=tikv

|

||||

- --path=pd0:2379

|

||||

- --log-file=/logs/tidb.log

|

||||

depends_on:

|

||||

- "tikv"

|

||||

restart: on-failure

|

||||

tiflash:

|

||||

image: pingcap/tiflash:v8.5.1

|

||||

volumes:

|

||||

- ./config/tiflash.toml:/tiflash.toml:ro

|

||||

- ./config/tiflash-learner.toml:/tiflash-learner.toml:ro

|

||||

- ./volumes/data:/data

|

||||

- ./volumes/logs:/logs

|

||||

command:

|

||||

- --config=/tiflash.toml

|

||||

depends_on:

|

||||

- "tikv"

|

||||

- "tidb"

|

||||

restart: on-failure

|

||||

|

|

@ -0,0 +1,17 @@

|

|||

<clickhouse>

|

||||

<users>

|

||||

<default>

|

||||

<password></password>

|

||||

<networks>

|

||||

<ip>::1</ip> <!-- change to ::/0 to allow access from all addresses -->

|

||||

<ip>127.0.0.1</ip>

|

||||

<ip>10.0.0.0/8</ip>

|

||||

<ip>172.16.0.0/12</ip>

|

||||

<ip>192.168.0.0/16</ip>

|

||||

</networks>

|

||||

<profile>default</profile>

|

||||

<quota>default</quota>

|

||||

<access_management>1</access_management>

|

||||

</default>

|

||||

</users>

|

||||

</clickhouse>

|

||||

|

|

@ -0,0 +1 @@

|

|||

ALTER SYSTEM SET ob_vector_memory_limit_percentage = 30;

|

||||

|

|

@ -0,0 +1,222 @@

|

|||

---

|

||||

# Copyright OpenSearch Contributors

|

||||

# SPDX-License-Identifier: Apache-2.0

|

||||

|

||||

# Description:

|

||||

# Default configuration for OpenSearch Dashboards

|

||||

|

||||

# OpenSearch Dashboards is served by a back end server. This setting specifies the port to use.

|

||||

# server.port: 5601

|

||||

|

||||

# Specifies the address to which the OpenSearch Dashboards server will bind. IP addresses and host names are both valid values.

|

||||

# The default is 'localhost', which usually means remote machines will not be able to connect.

|

||||

# To allow connections from remote users, set this parameter to a non-loopback address.

|

||||

# server.host: "localhost"

|

||||

|

||||

# Enables you to specify a path to mount OpenSearch Dashboards at if you are running behind a proxy.

|

||||

# Use the `server.rewriteBasePath` setting to tell OpenSearch Dashboards if it should remove the basePath

|

||||

# from requests it receives, and to prevent a deprecation warning at startup.

|

||||

# This setting cannot end in a slash.

|

||||

# server.basePath: ""

|

||||

|

||||

# Specifies whether OpenSearch Dashboards should rewrite requests that are prefixed with

|

||||

# `server.basePath` or require that they are rewritten by your reverse proxy.

|

||||

# server.rewriteBasePath: false

|

||||

|

||||

# The maximum payload size in bytes for incoming server requests.

|

||||

# server.maxPayloadBytes: 1048576

|

||||

|

||||

# The OpenSearch Dashboards server's name. This is used for display purposes.

|

||||

# server.name: "your-hostname"

|

||||

|

||||

# The URLs of the OpenSearch instances to use for all your queries.

|

||||

# opensearch.hosts: ["http://localhost:9200"]

|

||||

|

||||

# OpenSearch Dashboards uses an index in OpenSearch to store saved searches, visualizations and

|

||||

# dashboards. OpenSearch Dashboards creates a new index if the index doesn't already exist.

|

||||

# opensearchDashboards.index: ".opensearch_dashboards"

|

||||

|

||||

# The default application to load.

|

||||

# opensearchDashboards.defaultAppId: "home"

|

||||

|

||||

# Setting for an optimized healthcheck that only uses the local OpenSearch node to do Dashboards healthcheck.

|

||||

# This setting should be used for large clusters or for clusters with ingest heavy nodes.

|

||||

# It allows Dashboards to only healthcheck using the local OpenSearch node rather than fan out requests across all nodes.

|

||||

#

|

||||

# It requires the user to create an OpenSearch node attribute with the same name as the value used in the setting

|

||||

# This node attribute should assign all nodes of the same cluster an integer value that increments with each new cluster that is spun up

|

||||

# e.g. in opensearch.yml file you would set the value to a setting using node.attr.cluster_id:

|

||||

# Should only be enabled if there is a corresponding node attribute created in your OpenSearch config that matches the value here

|

||||

# opensearch.optimizedHealthcheckId: "cluster_id"

|

||||

|

||||

# If your OpenSearch is protected with basic authentication, these settings provide

|

||||

# the username and password that the OpenSearch Dashboards server uses to perform maintenance on the OpenSearch Dashboards

|

||||

# index at startup. Your OpenSearch Dashboards users still need to authenticate with OpenSearch, which

|

||||

# is proxied through the OpenSearch Dashboards server.

|

||||

# opensearch.username: "opensearch_dashboards_system"

|

||||

# opensearch.password: "pass"

|

||||

|

||||

# Enables SSL and paths to the PEM-format SSL certificate and SSL key files, respectively.

|

||||

# These settings enable SSL for outgoing requests from the OpenSearch Dashboards server to the browser.

|

||||

# server.ssl.enabled: false

|

||||

# server.ssl.certificate: /path/to/your/server.crt

|

||||

# server.ssl.key: /path/to/your/server.key

|

||||

|

||||

# Optional settings that provide the paths to the PEM-format SSL certificate and key files.

|

||||

# These files are used to verify the identity of OpenSearch Dashboards to OpenSearch and are required when

|

||||

# xpack.security.http.ssl.client_authentication in OpenSearch is set to required.

|

||||

# opensearch.ssl.certificate: /path/to/your/client.crt

|

||||

# opensearch.ssl.key: /path/to/your/client.key

|

||||

|

||||

# Optional setting that enables you to specify a path to the PEM file for the certificate

|

||||

# authority for your OpenSearch instance.

|

||||

# opensearch.ssl.certificateAuthorities: [ "/path/to/your/CA.pem" ]

|

||||

|

||||

# To disregard the validity of SSL certificates, change this setting's value to 'none'.

|

||||

# opensearch.ssl.verificationMode: full

|

||||

|

||||

# Time in milliseconds to wait for OpenSearch to respond to pings. Defaults to the value of

|

||||

# the opensearch.requestTimeout setting.

|

||||

# opensearch.pingTimeout: 1500

|

||||

|

||||

# Time in milliseconds to wait for responses from the back end or OpenSearch. This value

|

||||

# must be a positive integer.

|

||||

# opensearch.requestTimeout: 30000

|

||||

|

||||

# List of OpenSearch Dashboards client-side headers to send to OpenSearch. To send *no* client-side

|

||||

# headers, set this value to [] (an empty list).

|

||||

# opensearch.requestHeadersWhitelist: [ authorization ]

|

||||

|

||||

# Header names and values that are sent to OpenSearch. Any custom headers cannot be overwritten

|

||||

# by client-side headers, regardless of the opensearch.requestHeadersWhitelist configuration.

|

||||

# opensearch.customHeaders: {}

|

||||

|

||||

# Time in milliseconds for OpenSearch to wait for responses from shards. Set to 0 to disable.

|

||||

# opensearch.shardTimeout: 30000

|

||||

|

||||

# Logs queries sent to OpenSearch. Requires logging.verbose set to true.

|

||||

# opensearch.logQueries: false

|

||||

|

||||

# Specifies the path where OpenSearch Dashboards creates the process ID file.

|

||||

# pid.file: /var/run/opensearchDashboards.pid

|

||||

|

||||

# Enables you to specify a file where OpenSearch Dashboards stores log output.

|

||||

# logging.dest: stdout

|

||||

|

||||

# Set the value of this setting to true to suppress all logging output.

|

||||

# logging.silent: false

|

||||

|

||||

# Set the value of this setting to true to suppress all logging output other than error messages.

|

||||

# logging.quiet: false

|

||||

|

||||

# Set the value of this setting to true to log all events, including system usage information

|

||||

# and all requests.

|

||||

# logging.verbose: false

|

||||

|

||||

# Set the interval in milliseconds to sample system and process performance

|

||||

# metrics. Minimum is 100ms. Defaults to 5000.

|

||||

# ops.interval: 5000

|

||||

|

||||

# Specifies locale to be used for all localizable strings, dates and number formats.

|

||||

# Supported languages are the following: English (en, the default) and Chinese (zh-CN).

|

||||

# i18n.locale: "en"

|

||||

|

||||

# Set the allowlist to check input graphite URL. Allowlist is the default check list.

|

||||

# vis_type_timeline.graphiteAllowedUrls: ['https://www.hostedgraphite.com/UID/ACCESS_KEY/graphite']

|

||||

|

||||

# Set the blocklist to check input graphite URL. Blocklist is an IP list.

|

||||

# Below is an example for reference

|

||||

# vis_type_timeline.graphiteBlockedIPs: [

|

||||

# //Loopback

|

||||

# '127.0.0.0/8',

|

||||

# '::1/128',

|

||||

# //Link-local Address for IPv6

|

||||

# 'fe80::/10',

|

||||

# //Private IP address for IPv4

|

||||

# '10.0.0.0/8',

|

||||

# '172.16.0.0/12',

|

||||

# '192.168.0.0/16',

|

||||

# //Unique local address (ULA)

|

||||

# 'fc00::/7',

|

||||

# //Reserved IP address

|

||||

# '0.0.0.0/8',

|

||||

# '100.64.0.0/10',

|

||||

# '192.0.0.0/24',

|

||||

# '192.0.2.0/24',

|

||||

# '198.18.0.0/15',

|

||||

# '192.88.99.0/24',

|

||||

# '198.51.100.0/24',

|

||||

# '203.0.113.0/24',

|

||||

# '224.0.0.0/4',

|

||||

# '240.0.0.0/4',

|

||||

# '255.255.255.255/32',

|

||||

# '::/128',

|

||||

# '2001:db8::/32',

|

||||

# 'ff00::/8',

|

||||

# ]

|

||||

# vis_type_timeline.graphiteBlockedIPs: []

|

||||

|

||||

# opensearchDashboards.branding:

|

||||

# logo:

|

||||

# defaultUrl: ""

|

||||

# darkModeUrl: ""

|

||||

# mark:

|

||||

# defaultUrl: ""

|

||||

# darkModeUrl: ""

|

||||

# loadingLogo:

|

||||

# defaultUrl: ""

|

||||

# darkModeUrl: ""

|

||||

# faviconUrl: ""

|

||||